Overview

Starting from scratch in a fast-moving space.

The AI landscape was shifting week to week, and we had no prior product to build on. The product strategy was to go deep on one high-pain vertical rather than spread thin as existing competitor tools did.

Timeline

5 weeks

Role

Founding Product Designer

Responsibilities

Product Strategy

MVP Vision

User-Informed Iterations

Foundational Design System

The Problem

Pitchbook assembly is repetitive, and there’s no room for error.

M&A analysts spend 20+ hours per deck pulling financials, updating comps, and rebuilding slides as direction shifts. These decks inform decisions involving hundreds of millions of dollars. A single incorrect metric can undermine credibility or influence a strategic decision based on flawed data.

The real challenge wasn’t generating slides faster; it was...

Defining the human–AI interaction model and designing a system users could trust.

Design Iterations

Testing three autonomy models with real M&A teams.

The goal was to understand how AI responsibility should be distributed in high-stakes workflows.

1.

Full Autonomy

AI handled everything end-to-end with minimal user involvement. But users couldn't verify sources or reasoning.

Optimized for: Speed

Broke: Trust

2.

Approval Driven

Every step surfaced for review. But users felt like they were micromanaging the AI.

Optimized for: Verification

Broke: Flow

3.

Guided Autonomy

AI leads by default. Users step in only at moments of judgement.

Optimized for: Trust + Speed

Unlocked: Confidence
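
The difference between the three models comes down to where the user is asked to step in. The sketch below is an illustration only, not Searchflow's implementation: the step names, the `needs_judgement` flag, and the `review_points` helper are all hypothetical, chosen to make the comparison concrete.

```python
from dataclasses import dataclass
from enum import Enum


class AutonomyModel(Enum):
    FULL = "full_autonomy"
    APPROVAL_DRIVEN = "approval_driven"
    GUIDED = "guided_autonomy"


@dataclass(frozen=True)
class Step:
    name: str
    # Hypothetical flag: does this step involve a judgement call by the analyst?
    needs_judgement: bool


def review_points(steps: list[Step], model: AutonomyModel) -> list[str]:
    """Return the names of the steps where the user is asked to step in."""
    if model is AutonomyModel.FULL:
        # AI runs end-to-end; nothing is surfaced for review.
        return []
    if model is AutonomyModel.APPROVAL_DRIVEN:
        # Every step is surfaced for review.
        return [s.name for s in steps]
    # Guided: AI leads by default and pauses only at judgement moments.
    return [s.name for s in steps if s.needs_judgement]


# Assumed steps, loosely based on the tasks described in this case study.
# Which of them count as judgement moments is an assumption for illustration.
STEPS = [
    Step("pull financials", needs_judgement=False),
    Step("update comps", needs_judgement=True),
    Step("apply firm template", needs_judgement=False),
    Step("verify cited sources", needs_judgement=True),
]

for model in AutonomyModel:
    print(model.value, review_points(STEPS, model))
```

Under these assumptions, Full Autonomy surfaces no steps, Approval Driven surfaces all four, and Guided Autonomy surfaces only the two flagged as judgement moments.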

Results

Guided Autonomy delivered the best balance of speed, control, and confidence.

Full autonomy increased speed but reduced confidence. Approval-driven flows improved verification but disrupted workflow. Teams consistently preferred a model where AI led by default, with clear moments for user intervention.

Insight

Trust comes from control at the right moments.

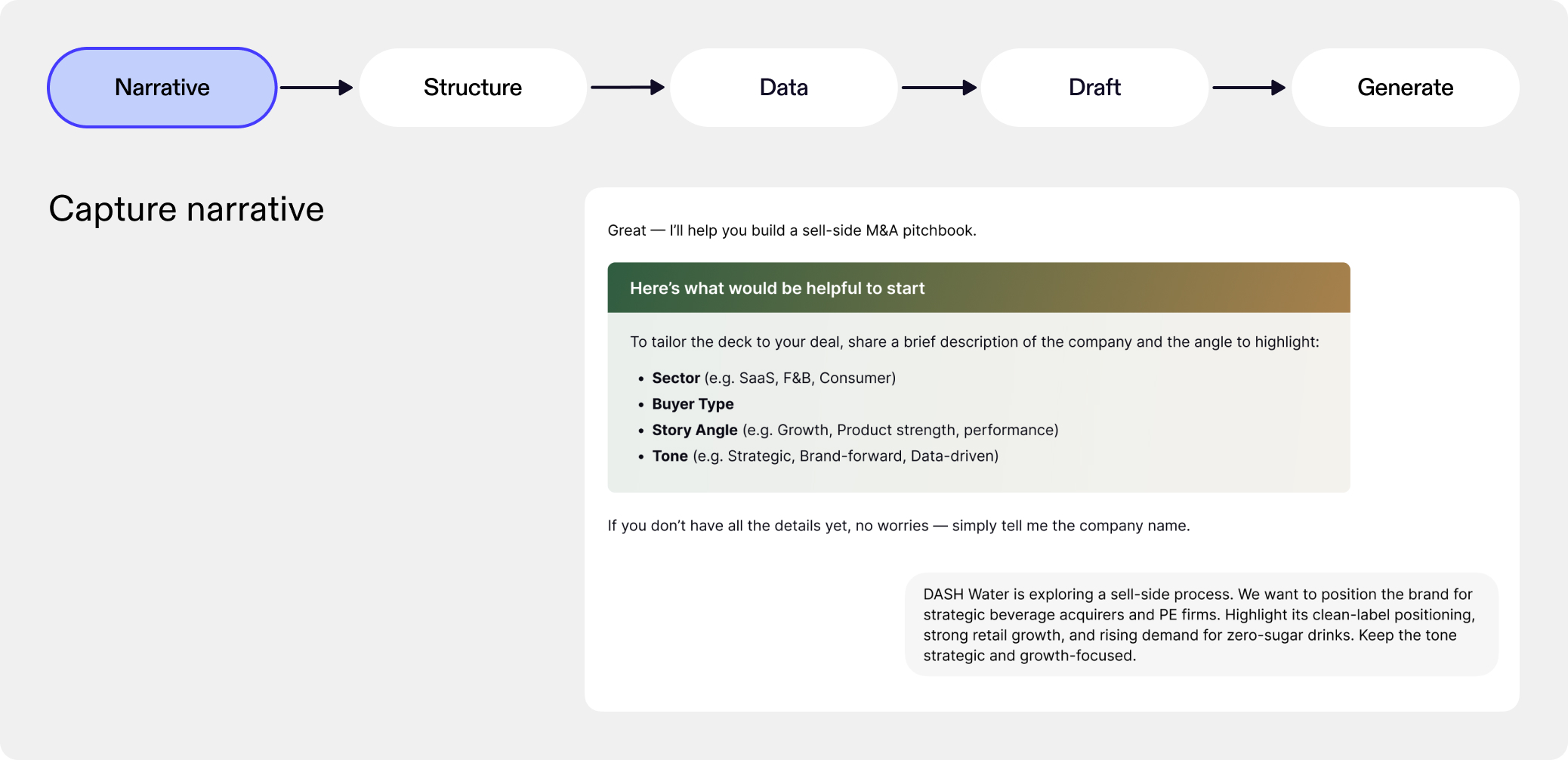

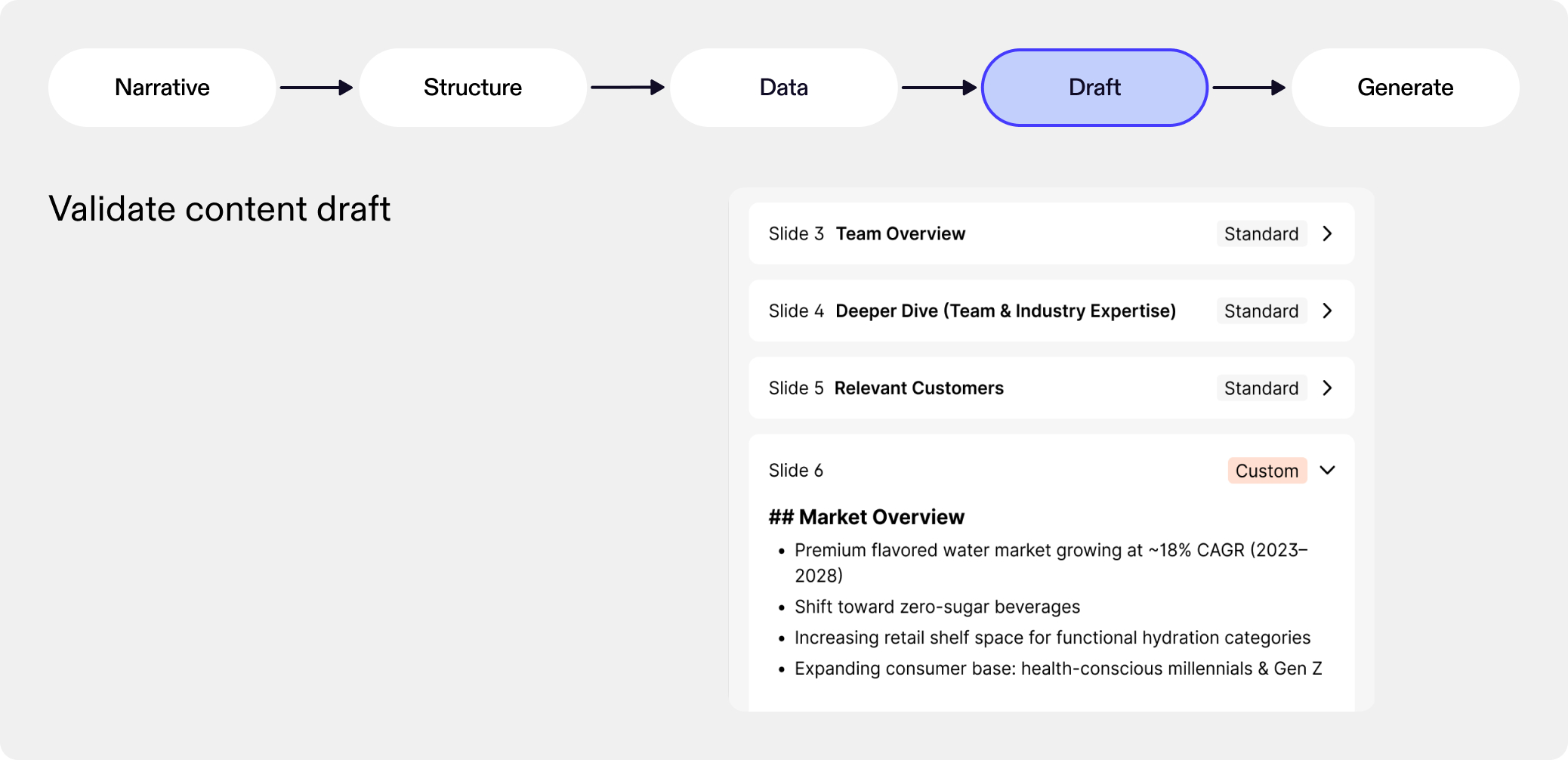

Defining the human-AI workflow

Designed around how M&A teams think and work.

Pitchbooks aren’t created in a single jump; they’re built in stages. We structured the product to follow the natural sequence analysts already use when preparing a deck. By aligning the workflow to this familiar progression, the system fits seamlessly into how teams already think and operate.

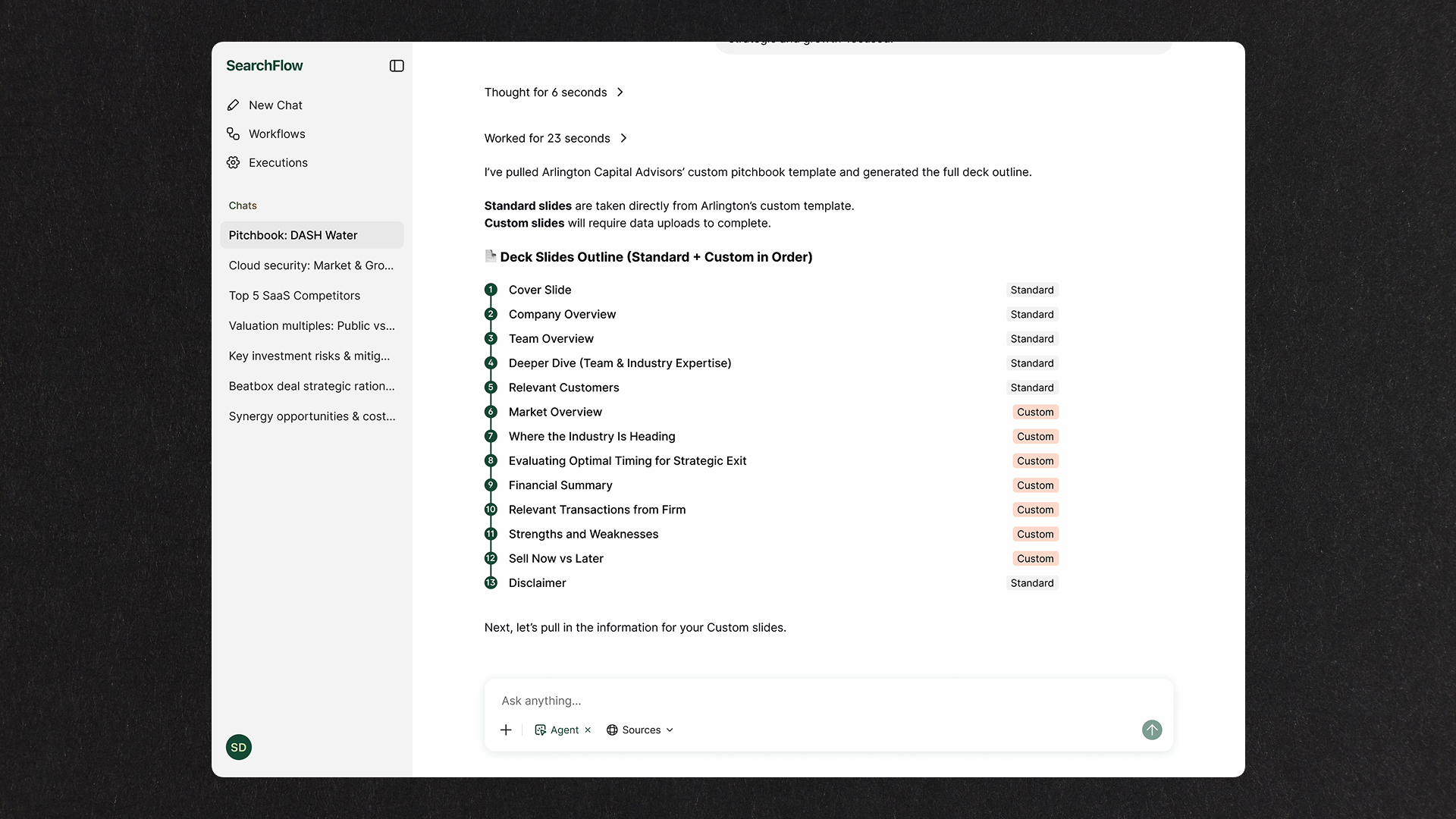

The MVP

Capture intent first:

Searchflow gathers context from the partner brief to clarify direction before any work begins.

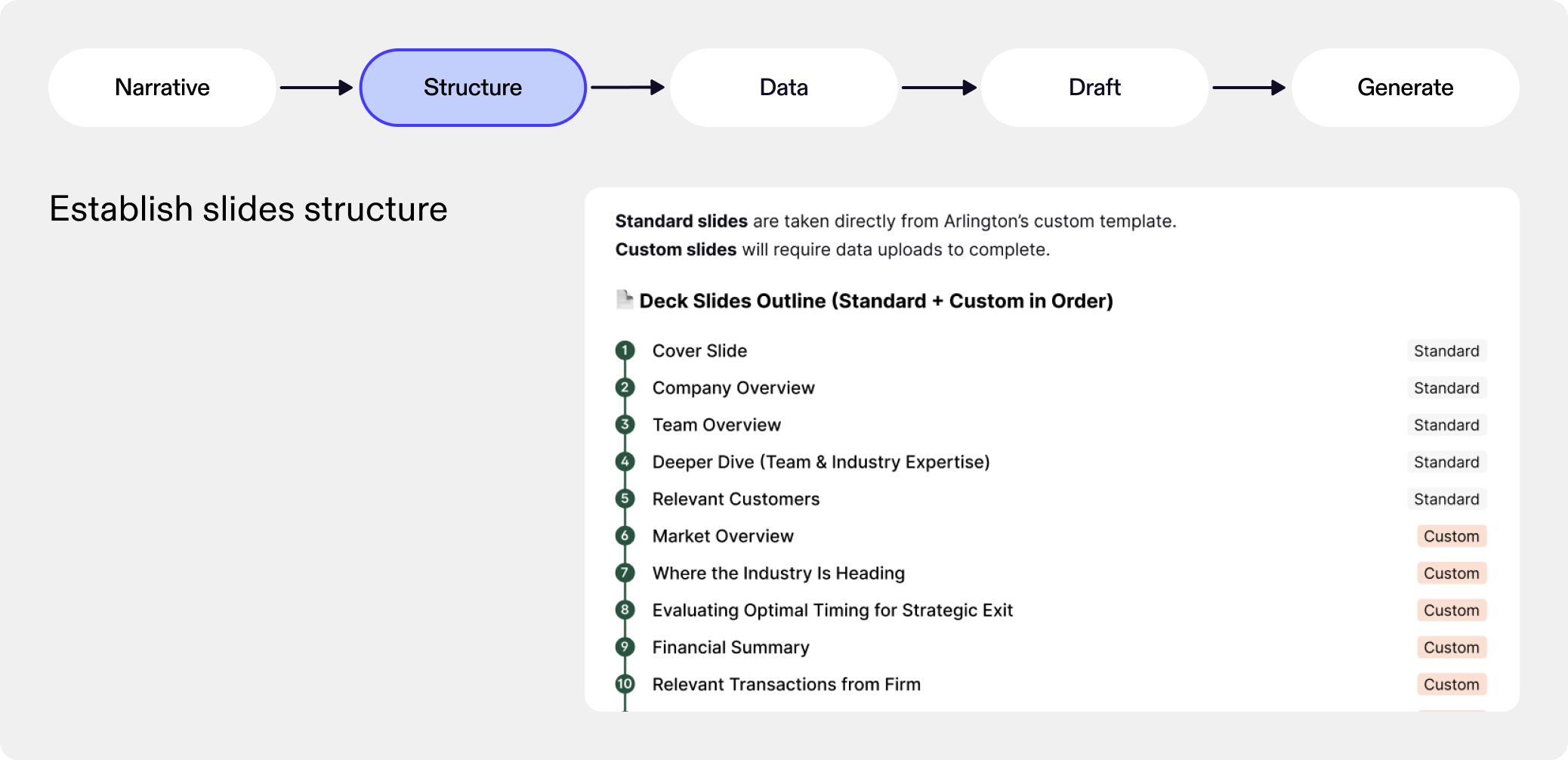

Match the firm's existing pitchbook format:

Searchflow applies the firm's template and maps out which slides need custom work so users know exactly where to focus.

Work with what M&A analysts already have:

Direct integrations weren't feasible for the early product, but users already work with exports. Searchflow parses these and maps them to the slides they support.

Trace every number to its source:

Searchflow generates a text draft with slide-level citations so users can verify where the data comes from before the deck is built.

Built to hand off, not replace:

Editing the generated deck wasn't feasible at our early stage, so it was important to get structure, data, and sources right. At the same time, analysts always do their final polish in PowerPoint, so we designed the output to meet them there.

Searchflow is shaped by four principles.

These guardrails ensure AI supports the workflow without disrupting it.

Outcome

Demo-ready MVP built on a scalable AI workflow framework.

Reflection

Building in a space that was constantly changing.

We started this from scratch. There was no existing product, and the AI space was moving fast. Every week there was a new model, a new tool, or a new company doing something impressive. It would have been easy to chase whatever felt new.

Instead, we chose to go deep on one specific problem: helping M&A teams build pitchbooks. That meant sitting with a messy, high-stakes workflow instead of building a generic AI tool.

At the same time, I was learning a lot on my own. I spent time reading, testing tools, and studying how other teams were thinking about AI interactions. I didn’t want to just use AI; I wanted to understand how it should behave.

Reshaping how I think about UX

I used to think mostly in terms of systems, flows and screens. But with AI, the harder questions are about behavior: when the system should move, when it should wait, and who’s responsible if something goes wrong.

I saw how small interaction choices could either build confidence or quietly erode it.